Claude Opus 4.5 paired with Haiku 4.5 subagents hit 87% on SWE-bench Verified. The same Opus 4.5 running alone scored 74.8%.

That's a 12.2 percentage point gap. Same model generation. Same weights. Different harness configuration.

If you've been reading benchmark comparisons and drawing conclusions about model quality, that number should stop you cold. The 12-point improvement wasn't from a better model. It came from a harness that the model was specifically trained to work inside. And that training loop is one of the least-discussed dynamics in AI infrastructure right now.

This post is about that loop. It's part of the Agent Harnesses series, which covers the infrastructure layer that actually runs your AI. This one is about what happens when the model and the harness are trained together, why that creates compounding advantages for providers, and what it means for teams making serious platform decisions today.

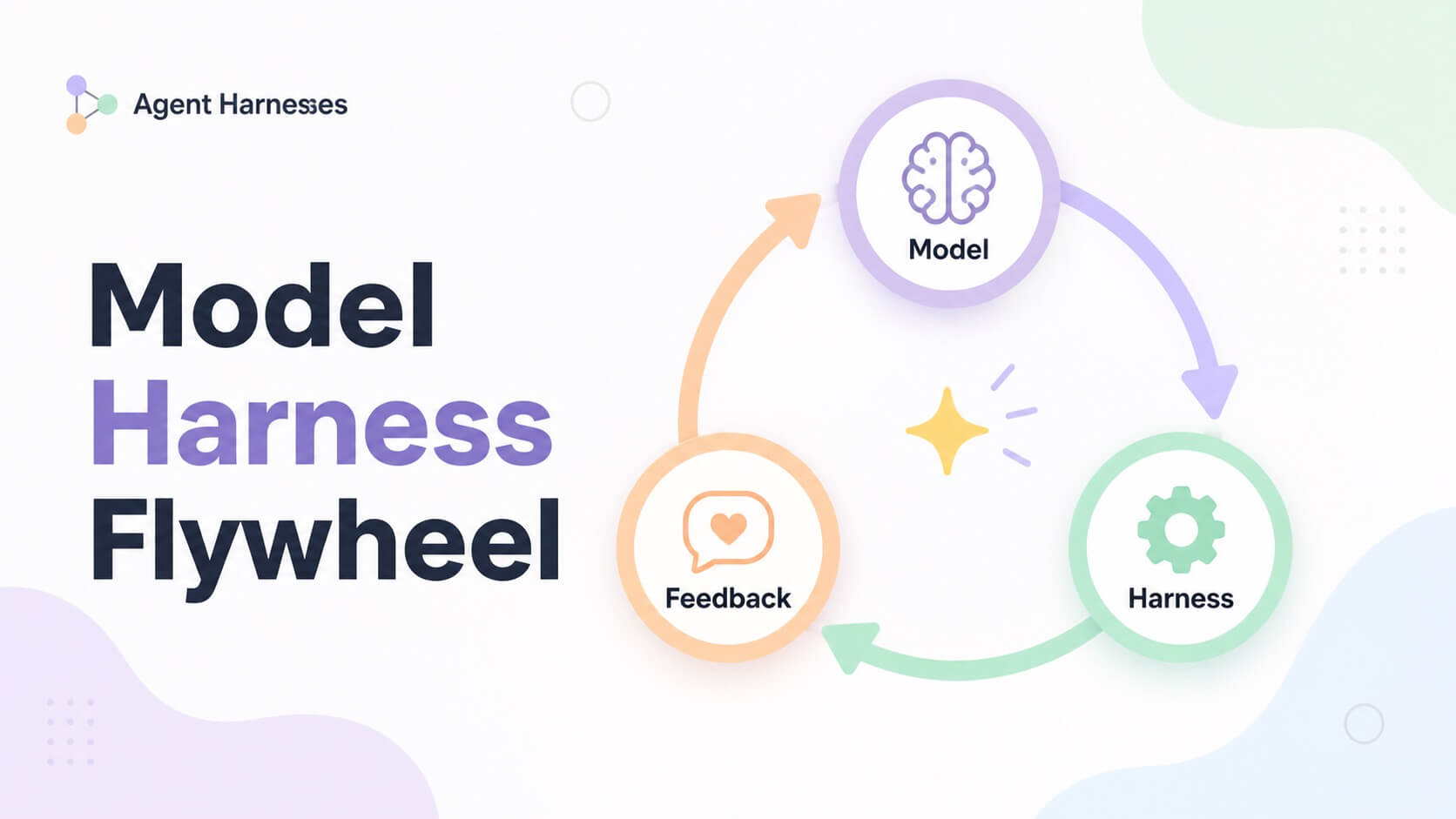

The flywheel

Every real-world agentic deployment generates data. When a developer uses Claude Code, every file read, bash command, tool call, and multi-step reasoning sequence leaves a trace. The model decided to use a tool. The tool ran. The task succeeded or failed. That perception-reasoning-action trace is raw training signal.

Anthropic collects that signal. OpenAI collects the equivalent from Codex. They feed it into training loops: RLHF (reinforcement learning from human feedback) or its AI-critic variant, RLAIF. Human raters or AI evaluators assess whether the agent made good decisions. Did it pick the right tools? Was the tool call well-formed? Did the action sequence make sense for the task? The rated data trains the next model version to produce better decisions in that specific harness.
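As a rough illustration of the rating step, the sketch below turns rated traces into chosen/rejected pairs of the kind a preference-based trainer consumes. The `RatedTrace` fields, the 0-to-1 rating scale, and the tool names are assumptions made for the example, not any provider's actual schema.

```python
from dataclasses import dataclass
from itertools import combinations


@dataclass(frozen=True)
class RatedTrace:
    """Hypothetical record for one agent run; field names are illustrative."""
    task_id: str        # which task the agent attempted
    tool_calls: tuple   # ordered tool names the agent invoked
    succeeded: bool     # outcome reported by the harness
    rating: float       # score from a human rater or AI critic (assumed 0-1 scale)


def preference_pairs(traces):
    """Pair traces for the same task; the higher-rated one is 'chosen'."""
    by_task = {}
    for trace in traces:
        by_task.setdefault(trace.task_id, []).append(trace)

    pairs = []
    for group in by_task.values():
        for a, b in combinations(group, 2):
            if a.rating == b.rating:
                continue  # equal ratings carry no preference signal
            chosen, rejected = (a, b) if a.rating > b.rating else (b, a)
            pairs.append((chosen, rejected))
    return pairs


traces = [
    RatedTrace("fix-bug-1", ("read_file", "edit_file", "run_tests"), True, 0.9),
    RatedTrace("fix-bug-1", ("edit_file",), False, 0.2),
    RatedTrace("add-feature-2", ("read_file", "bash"), True, 0.7),
]
pairs = preference_pairs(traces)
```

Only the two `fix-bug-1` traces form a pair here, because a preference needs at least two attempts at the same task.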

The loop:

    Users run agents in the harness
      |
      v
    Traces collected (tool calls, outcomes, sequences)
      |
      v
    Traces rated (human or AI critics)
      |
      v
    Model trained on preference data
      |
      v
    Model performs better inside that harness
      |
      v
    Better performance attracts more users
      |
      v
    More usage, more traces
      |
      v
    Repeat

This is not speculation. OpenAI's Codex announcement (May 2025) says explicitly that codex-1 is fine-tuned from o3, trained with RL on real-world coding tasks across many development environments, with the goal of producing code that matches human style and PR conventions. Not just technically correct code. Style and convention. That is harness-specific training.

Anthropic's constitutional AI framework uses AI critics to evaluate outputs against principles at scale. The January 2026 update to their constitutional principles signals this framework is actively iterated, not static.

The result: these models are not learning to "use tools well" in a general sense. They're learning to use these specific tools, in these specific schemas, with these specific permission patterns. Claude is being trained to be excellent inside Claude Code. Codex is being trained to be excellent inside Codex's harness. The model and the harness are co-designed and co-trained.

What the numbers actually measure

The 87% vs 74.8% delta from Claude Opus 4.5's system card deserves a closer read.

When Claude Opus 4.5 acts as orchestrator with Claude Haiku 4.5 as subagents, it scores 87.0% on SWE-bench Verified. Opus 4.5 running alone scores 74.8%. When Claude Sonnet 4.5 orchestrates Haiku 4.5, the score is 85.4%. When Sonnet 4.5 acts as its own orchestrator, it falls to 66.5%.

That 18.9 percentage point gap between Sonnet orchestrating Haiku subagents and Sonnet orchestrating itself is the tell. Orchestration capability is something the model has been specifically trained for. It's not a general reasoning skill that transfers automatically. It's a harness-specific behavior.

The GPT-5.5 data is sharper. Endor Labs tested GPT-5.5 in two different harnesses. In OpenAI's native Codex harness, it scored 61.5% on a functionality benchmark. In Cursor's harness, with identical weights, it scored 87.2%. That's a 25.7 percentage point swing from changing the harness around the same model.

Cursor's explanation holds up: Cursor's entire product is the harness. OpenAI has a much larger product surface to maintain. Cursor has iterated on harness quality longer and more intensively. More engineering time in the harness gets you more performance out of the same model.

Anthropic has separately claimed their custom harness contributes roughly 10 percentage points to SWE-bench scores, independent of the model. That acknowledgment confirms the dual evaluation problem: you cannot read a SWE-bench score and attribute it cleanly to model quality.
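The gaps quoted in this section are simple differences, and they can be recomputed from the scores as stated in this post. The dictionary keys below are labels invented for the example.

```python
# Scores as quoted in this post, in percent. Keys are illustrative labels.
SCORES = {
    "opus_orchestrating_haiku": 87.0,
    "opus_alone": 74.8,
    "sonnet_orchestrating_haiku": 85.4,
    "sonnet_orchestrating_itself": 66.5,
    "gpt55_in_cursor": 87.2,
    "gpt55_in_codex": 61.5,
}


def gap(better, worse):
    """Difference between two configurations, in percentage points."""
    return round(SCORES[better] - SCORES[worse], 1)


print(gap("opus_orchestrating_haiku", "opus_alone"))                   # 12.2
print(gap("sonnet_orchestrating_haiku", "sonnet_orchestrating_itself"))  # 18.9
print(gap("gpt55_in_cursor", "gpt55_in_codex"))                         # 25.7
```

In each row the configuration changes while the model named on the left stays the same, so the difference cannot be read as a pure model-quality effect.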

Why SWE-bench is not a model comparison

SWE-bench Verified measures agent performance. The score is a combined function of the underlying model, the harness design, the co-training between them, and whether the model has seen the test data before.

These variables are not separated in any public leaderboard.

In February 2026, OpenAI's Frontier Evals team stopped reporting SWE-bench Verified scores. Their audit found over 60% of the benchmark's problems were unsolvable due to broken tests. More critically: every frontier model they tested, including GPT-5.2, Claude Opus 4.5, and Gemini 3 Flash, could reproduce verbatim gold patches for certain tasks. The benchmark problems come from open-source repositories. Those repositories are in training data. The models have memorized the answers.

Research published as "The SWE-Bench Illusion" (arXiv: 2506.12286) documents this directly. Scores are inflated by instance-specific and repository-level memorization. Analysis using PatchDiff found that 7.8% of patches counted as "passed" actually fail additional tests, inflating reported scores by up to 4.5 percentage points. SWE-Rebench, a decontaminated version using post-cutoff tasks, shows certain models with disproportionately large score drops, consistent with training data overlap.

SWE-bench Pro, developed by Scale AI, takes direct aim at this. Tasks are split across public repositories, held-out private GPL repositories, and commercial codebases. The commercial tier is never publicly released, making memorization ineffective. Models that scored 80% on SWE-bench Verified scored roughly 23% on SWE-bench Pro.

That 57-point gap between 80% and 23% shows how much harness effects, memorization, and benchmark contamination can contribute to a number that usually gets read as model quality.

For a model-only benchmark to be valid, you would need a standardized harness used by all evaluators, tasks created after all training cutoffs, tool schemas that appear in no training data, and genuine generalization requirements. That benchmark does not exist in widespread use. When you see a leaderboard, you're looking at agents, not models.

The lock-in that won't show up on an invoice

Standard vendor lock-in is visible. API pricing, migration costs, proprietary integrations. The lock-in created by co-training is invisible. It doesn't appear on any pricing page, and it compounds quietly over time.

Here's how it accumulates.

Your team builds extensively on Claude Code over 12 to 18 months. Anthropic collects traces from your usage patterns. Not your individual API calls in isolation. Aggregate signal about what Claude Code users do: the file structures they work with, the tool call sequences that complete tasks successfully, the edge cases where the model loses the thread. All of that feeds the next training run.

The next Claude generation is better at the workflows that match Claude Code's design. Your team gets more efficient at using Claude Code. Claude gets better at the things Claude Code users do. The alignment between model and harness deepens every training cycle.

If you switch to a competitor model, the new model hasn't been trained on Claude Code's interaction patterns. You lose that accumulated alignment. The competitor might be excellent in absolute terms. In your harness, with your workflows, it starts from zero on the co-training curve.

The Anthropic postmortem from April 2026 makes this dependency concrete. Users reported widespread performance degradation. The model weights hadn't changed. Three harness modifications had been deployed at different times, to different traffic slices: changes to the system prompt, changes to caching logic, changes to the reasoning effort setting. Together they produced what looked like broad, inconsistent degradation. A single line in a system prompt, a caching policy, a default reasoning depth: any one of these can fundamentally alter user experience when the model's training has been calibrated to a specific harness configuration.

The switching cost is not the migration engineering. It's the co-training alignment you leave behind.

| Factor | Provider-locked (Claude Code, Codex) | Open (OpenCode, custom harness) |
|---|---|---|
| Co-training benefit | Accumulates automatically | Requires your own investment |
| Switching cost | Grows with usage | Self-determined |
| Data ownership | Provider's | Yours |
| Lock-in transparency | Not visible on invoices | Explicit |
| 3-year competitive position | Dependent on provider trajectory | Dependent on your own flywheel |

The lock-in runs both directions. Anthropic is also locked into serving Claude Code users well, because that's where the next generation's training data comes from. Users generate the data. The lab generates the alignment. The loop reinforces both sides.

What open-weight teams are missing

Llama 4 and DeepSeek V4-Pro are serious models. DeepSeek V4-Pro hits 80.6% on SWE-bench Verified, within 0.2 points of Claude Opus 4.6, under an MIT license.

Neither has a first-party agentic harness with a co-training loop. Meta does not run a Claude Code equivalent. DeepSeek does not operate a hosted agentic product generating real deployment traces at scale. Teams building on these models don't get a provider-run flywheel. The model cannot improve from their deployment data unless they run their own training.

Every training cycle that Anthropic and OpenAI complete, using real traces from millions of developers, is a cycle that open-weight teams are not running. The gap between harness-optimized and general-purpose models can widen with each iteration.

The AgentRL research (THUDM, arXiv: 2510.04206) shows what's possible. RL-trained open LLMs (Qwen2.5, GLM-4-9B) in a matched multi-turn harness significantly outperform GPT-5, Claude Sonnet 4, and DeepSeek-R1 on agentic tasks. A single multi-task trained model matches the performance of five separately trained task-specific models and generalizes to unseen tasks.

The phrase to pay attention to is "matched harness." The training environment must replicate the deployment environment. That's the condition for the gains to show up.

Open-weight teams also have a genuine structural advantage. Deployment logs stay in their own infrastructure. No vendor pricing risk. Custom fine-tuning against proprietary data is possible in a way that provider-locked teams can't access.

The infrastructure to do this exists. OpenRLHF (arXiv: 2405.11143) runs on Ray and vLLM, supports models of 70B parameters and larger, and implements PPO, GRPO, REINFORCE++, and DAPO. One operational note: RLHF training spends roughly 80% of compute time on sample generation, not gradient updates, so vLLM integration is critical for throughput. verl (HybridFlow) is an alternative with native multi-turn agentic RL support.
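To see why generation throughput matters so much, here is a back-of-the-envelope Amdahl's law calculation using the roughly 80% figure above. The speedup factors are hypothetical inputs, not measured vLLM numbers.

```python
def overall_speedup(generation_fraction, generation_speedup):
    """Amdahl's law: only the sample-generation share of the run gets faster."""
    if not 0.0 <= generation_fraction <= 1.0:
        raise ValueError("generation_fraction must be between 0 and 1")
    if generation_speedup <= 0:
        raise ValueError("generation_speedup must be positive")
    untouched = 1.0 - generation_fraction               # e.g. gradient updates
    accelerated = generation_fraction / generation_speedup
    return 1.0 / (untouched + accelerated)


# Assume 80% of time is generation (the figure quoted above).
for factor in (2.0, 4.0, 10.0):  # hypothetical generation speedups
    print(factor, round(overall_speedup(0.8, factor), 2))
```

With 80% of time in generation, the ceiling on end-to-end speedup from faster sampling alone is 5x, since the remaining 20% is untouched.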

What a private flywheel actually costs

For large enterprises (1,000+ engineers, significant AI deployment), this is technically feasible today. A private co-training loop needs deployment infrastructure with full trace capture (every session logged, tool calls, outcomes, metadata tagged by task type and harness version), a preference data pipeline (human annotators at $50,000 to $200,000 annually per specialized domain, or RLAIF using AI critics at lower cost), and GPU infrastructure for training.
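A minimal sketch of what "full trace capture" could look like as one JSON line per session, tagged by task type and harness version. The class and field names are hypothetical, and a real pipeline would also need redaction, storage, and retention policy.

```python
import json
import time
import uuid
from dataclasses import asdict, dataclass, field


@dataclass
class ToolCall:
    name: str        # tool the agent invoked
    arguments: dict  # arguments as sent to the tool
    ok: bool         # whether the tool call succeeded


@dataclass
class SessionTrace:
    """Hypothetical per-session record; one JSON line per session."""
    task_type: str
    harness_version: str
    tool_calls: list = field(default_factory=list)
    outcome: str = "unknown"
    session_id: str = field(default_factory=lambda: uuid.uuid4().hex)
    started_at: float = field(default_factory=time.time)

    def record(self, name, arguments, ok):
        self.tool_calls.append(ToolCall(name, arguments, ok))

    def to_jsonl(self):
        # asdict() recurses into the nested ToolCall dataclasses.
        return json.dumps(asdict(self), sort_keys=True)


trace = SessionTrace(task_type="bugfix", harness_version="1.4.2")
trace.record("read_file", {"path": "app.py"}, ok=True)
trace.record("run_tests", {"target": "tests/"}, ok=False)
trace.outcome = "failed"
line = trace.to_jsonl()
```

Tagging every record with the harness version is what later lets you separate a model regression from a harness change.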

PPO-based RLHF requires an actor model, reward model, reference model, and critic model running simultaneously. A minimum viable cluster is 8x H100 at roughly $200K in hardware plus cloud spot instances for burst training. First-year all-in: $500K to $2M including hardware, annotation, and MLOps talent.

ZhipuAI is already doing this. AgentRL is deployed in their AutoGLM product.

For mid-sized teams (50 to 500 engineers), the practical path skips full RLHF. Outcome-based rewards (did the agent complete the task?) cut human annotation requirements significantly. Fine-tuning with DPO rather than full PPO reduces infrastructure requirements further. LoRA on cloud spot instances brings compute cost down considerably. Realistic timeline: first meaningful fine-tuning cycle by Q3/Q4 2026, an operational flywheel by 2027.
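For intuition on why DPO is lighter than PPO, here is the per-pair DPO loss written in plain Python. It needs only log-probabilities from the policy and a frozen reference model, with no separate reward or critic model. The "chosen" response is assumed to come from a run that completed the task and the "rejected" one from a run that did not; the log-probability values are made up.

```python
import math


def dpo_loss(policy_chosen_logp, policy_rejected_logp,
             ref_chosen_logp, ref_rejected_logp, beta=0.1):
    """DPO loss for one pair: -log(sigmoid(beta * margin)).

    margin measures how much more the policy prefers the chosen response
    over the rejected one, relative to the reference model.
    """
    margin = ((policy_chosen_logp - ref_chosen_logp)
              - (policy_rejected_logp - ref_rejected_logp))
    x = beta * margin
    # Numerically stable form of log(1 + exp(-x)).
    return max(-x, 0.0) + math.log1p(math.exp(-abs(x)))


# Made-up sequence log-probabilities for one outcome-labelled pair.
untrained = dpo_loss(-12.0, -12.0, -12.0, -12.0)  # policy equals reference
improved = dpo_loss(-10.0, -14.0, -12.0, -12.0)   # policy favors the successful run
```

When the policy matches the reference the loss is log 2, and it falls as the policy shifts probability toward the successful run.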

For smaller teams, a full RLHF loop isn't viable. Prompt engineering, few-shot examples, and smaller-scale supervised fine-tuning are the realistic options. The flywheel advantage is asymmetrically available to teams with scale.

| Factor | Build private flywheel | Use provider harness |
|---|---|---|
| Startup cost | High ($500K to $2M) | Low (API pricing) |
| Data ownership | Full | None or limited |
| Lock-in | Self-determined | Grows with usage |
| Alignment quality | Scales with your data volume | Scales with all provider users globally |
| Speed to first improvement | 6 to 12 months | Immediate and ongoing |
| 3-year position | Strong if you reach scale | Dependent on provider |

The multi-agent dimension

The flywheel gets deeper when agents work in teams, and the research here is genuinely surprising.

Stronger-MAS (arXiv: 2510.11062, ICLR 2026) introduces AT-GRPO, an algorithm that extends standard GRPO to multi-agent systems. Standard GRPO breaks in multi-agent settings because prompts vary by role and by turn. AT-GRPO handles that variance properly.
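The grouping idea can be sketched in a few lines. GRPO normalizes each sample's reward against the other samples in its group; the version below keys the groups by role and turn so that rewards are only compared across like-for-like prompts. This illustrates the grouping concept only and is not the paper's AT-GRPO implementation; the sample format and role names are assumptions.

```python
from collections import defaultdict
from statistics import mean, pstdev


def grouped_advantages(samples, eps=1e-8):
    """Group-relative advantages, grouped by (role, turn).

    samples: list of (role, turn, reward) tuples.
    Returns one advantage per sample, in input order.
    """
    groups = defaultdict(list)
    for role, turn, reward in samples:
        groups[(role, turn)].append(reward)

    # Per-group baseline and spread.
    stats = {key: (mean(vals), pstdev(vals)) for key, vals in groups.items()}

    advantages = []
    for role, turn, reward in samples:
        mu, sigma = stats[(role, turn)]
        advantages.append((reward - mu) / (sigma + eps))
    return advantages


samples = [
    ("planner", 0, 1.0),
    ("planner", 0, 0.0),
    ("coder", 1, 0.5),
    ("coder", 1, 0.5),
]
adv = grouped_advantages(samples)
```

If all four samples were pooled into one group, the planner and coder rewards would be normalized against each other even though they answer different prompts, which is the variance problem described above.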

On long-horizon planning tasks, single-agent RL baselines achieve between 14% and 47% accuracy. The same models trained with AT-GRPO in a matched multi-agent harness achieve 96% to 99.5%. Coding tasks improve by 3.87 to 7.62 percentage points. Math tasks improve by 9 to 17.93 percentage points.

The condition: these gains only hold when the training setup matches the deployment setup. Agents trained to collaborate in a specific multi-agent architecture, with specific role assignments, communication patterns, and tool access per role, perform well in that architecture. Train them for solo work and deploy them in a multi-agent harness: you don't get the same numbers.

The May 2026 paper on RL for multi-agent orchestration (arXiv: 2605.02801) formalizes this. Orchestration decisions (when to spawn subagents, whom to delegate to, how to communicate, how to aggregate results, when to stop) are trained behaviors, not emergent ones. The paper directly connects this framework to Kimi Agent Swarm, OpenAI Codex, and Anthropic Claude Code as evidence of orchestration trace-based training at production scale.

What this means strategically: it's not just individual model behavior that becomes harness-specific. Orchestration patterns, delegation decisions, and inter-agent communication are all part of it. A team switching away from a provider loses alignment at both levels.

The wrong harness penalty

The inverse of the flywheel is measurable too. Put a model in a harness it wasn't trained for and performance drops. Put it in a harness that's better designed than its native one and you can exceed native performance.

The GPT-5.5 result is the clearest example of the second case. 61.5% in Codex, 87.2% in Cursor. Cursor has invested more engineering depth in harness quality because the harness is their entire product. GPT-5.5 in a better-designed harness outperforms itself in its native one.

For teams running multi-model architectures, there's a subtler version of this. When a task is handed from one model to another mid-run, the receiving model must apply tools to conversation history produced by a different model, and that history is out of distribution relative to its training. Mitigation approaches exist: custom instructions at handoff points, steering the receiving model away from tools that appear in history but aren't in its current tool set, and re-anchoring context explicitly. Most teams aren't doing this yet.
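A hedged sketch of those handoff mitigations follows. The message format (dicts with `role`, `content`, and an optional `tool` key) and the function name are hypothetical and would need adapting to whatever your harness actually stores.

```python
def prepare_handoff(history, allowed_tools, task_summary):
    """Return a new message list for the receiving model.

    - Tool calls for tools the receiver lacks are rewritten as plain notes.
    - A leading system note re-anchors the task and lists usable tools.
    """
    allowed = set(allowed_tools)
    unavailable = set()
    cleaned = []
    for msg in history:
        tool = msg.get("tool")
        if tool is not None and tool not in allowed:
            unavailable.add(tool)
            note = f"[earlier step used unavailable tool '{tool}': {msg['content']}]"
            cleaned.append({"role": "user", "content": note})
        else:
            cleaned.append(dict(msg))  # copy so the original history is untouched

    anchor = (f"Task so far: {task_summary}. "
              f"Available tools: {', '.join(sorted(allowed))}.")
    if unavailable:
        anchor += f" Do not call: {', '.join(sorted(unavailable))}."
    return [{"role": "system", "content": anchor}] + cleaned


history = [
    {"role": "user", "content": "Fix the failing test."},
    {"role": "assistant", "content": "grep -n 'assert' test_app.py", "tool": "bash"},
    {"role": "assistant", "content": "open app.py", "tool": "read_file"},
]
handoff = prepare_handoff(history, ["read_file", "edit_file"], "fixing a failing test")
```

Rewriting rather than deleting the unavailable tool calls keeps the information those steps produced while removing the cue to call a tool that no longer exists.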

The Meta-Harness paper (arXiv: 2603.28052, Stanford, March 2026) quantifies how much headroom harness optimization leaves. Their system improves over state-of-the-art context management by 7.7 points while using 4x fewer tokens. That's harness work, not model improvement, producing gains a model upgrade might not.

A harness upgrade can produce a larger performance gain than a model upgrade. Teams that upgrade their model but not their harness may see no improvement, or regression.

What this means for platform decisions

Benchmark comparisons are not model comparisons. When you see Claude Code vs. Codex on a leaderboard, you're seeing two agents, two harnesses, two separate training loops. You cannot cleanly attribute score differences to model quality without controlling for harness effects. No public benchmark currently does that.

Switching costs compound invisibly. Every month of usage on a provider's harness increases the marginal value of staying versus switching. That cost doesn't appear anywhere on a pricing page. Model a 3-year scenario: by 2028, Claude models trained on three years of real Claude Code session data will be deeply aligned to Claude Code's tool schemas, permission patterns, and user workflows. A competitor model in 2028, even one with stronger raw capability, starts that alignment from zero.

Open-weight models can close the gap, but only with matched training. The AgentRL result is not theoretical. Smaller open-weight models, trained with RL in a matched harness, outperform much larger closed models on agentic tasks. The requirement is investment: the infrastructure, the annotation pipeline, the iteration cadence. For teams with the scale to run this, a private flywheel is a viable path. For teams without it, provider co-training is the default.

The harness is a strategic asset. If your team's harness generates unique, high-quality deployment traces, those traces compound over time. The harness design decisions you make today are shaping your team's AI performance in 2027.

The providers who understood this earliest are running the flywheel at scale. The teams that understand it now can build their own, choose providers whose flywheel benefits them, or accept that the alignment advantage will belong to whoever controls the harness.

Other posts in this series

- Agent Harnesses: The Hidden Layer That Actually Runs Your AI (start here if you haven't read the pillar post)

- Harness failure modes: infinite loops, context overflow, prompt injection, hallucinated tool calls

- Security in harness design: sandboxing, least-privilege tools, prompt injection defenses

- Observability: logging and tracing your harness

- Cost at scale: why a 1,000-token system prompt is worth engineering for

- Multi-modal harnesses: images, PDFs, audio

- Evaluation pipelines: how to test your harness, not just your model

- The AGENTS.md and CLAUDE.md pattern

- A2A protocol: inter-harness agent communication

- When not to use an agent harness

References

Research papers

- Stronger-MAS: Multi-Agent Reinforcement Learning for Collaborative LLMs
  Zhao, Hu, Wang, Hou, Zhang, Ding, Zhao. ICLR 2026.
  arXiv: 2510.11062. https://arxiv.org/abs/2510.11062

- AgentRL: Scaling Agentic Reinforcement Learning with a Multi-Turn, Multi-Task Framework
  Zhang, Liu, Lv, et al. (THUDM/Tsinghua). October 2025.
  arXiv: 2510.04206. https://arxiv.org/abs/2510.04206

- End-to-End Optimization of Model Harnesses (Meta-Harness)
  Lee, Nair, Zhang, Lee, Khattab, Finn. Stanford. March 2026.
  arXiv: 2603.28052. https://arxiv.org/abs/2603.28052

- Reinforcement Learning for LLM-based Multi-Agent Systems through Orchestration Traces
  Chenchen Zhang. May 2026.
  arXiv: 2605.02801. https://arxiv.org/abs/2605.02801

- OpenRLHF: An Easy-to-use, Scalable and High-performance RLHF Framework
  arXiv: 2405.11143. https://arxiv.org/abs/2405.11143
  GitHub: https://github.com/OpenRLHF/OpenRLHF

- The SWE-Bench Illusion: When State-of-the-Art LLMs Remember Instead of Reason
  arXiv: 2506.12286. https://arxiv.org/html/2506.12286v3

- Constitutional AI: Harmlessness from AI Feedback
  arXiv: 2212.08073. https://arxiv.org/abs/2212.08073

Official announcements and system cards

- Claude Opus 4.5 System Card (Anthropic, November 2025)
  https://assets.anthropic.com/m/64823ba7485345a7/Claude-Opus-4-5-System-Card.pdf

- Introducing Codex (OpenAI, May 2025)
  https://openai.com/index/introducing-codex/

- Harness Engineering (OpenAI)
  https://openai.com/index/harness-engineering/

- Why We No Longer Evaluate SWE-bench Verified (OpenAI, February 2026)
  https://openai.com/index/why-we-no-longer-evaluate-swe-bench-verified/

- Claude's Constitution (Anthropic, January 2026 update)
  https://www.anthropic.com/news/claudes-constitution

Benchmarks

- SWE-bench Leaderboard: https://www.swebench.com/

- SWE-bench Verified: https://llm-stats.com/benchmarks/swe-bench-verified

- SWE-bench Pro: https://labs.scale.com/leaderboard/swe_bench_pro_public

Industry analysis

- GPT-5.5 Cursor vs. Codex Harness Benchmark (Endor Labs / MindStudio, 2026)
  https://www.mindstudio.ai/blog/cursor-sdk-gpt-5-5-vs-native-codex-harness-endor-labs-benchmark

- Agent Harnesses Beat Model Upgrades: 5 Benchmarks (MindStudio, 2026)
  https://www.mindstudio.ai/blog/agent-harnesses-beat-model-upgrades-5-benchmarks

- Anthropic Claude Harness Postmortem (VentureBeat, April 2026)
  https://venturebeat.com/technology/mystery-solved-anthropic-reveals-changes-to-claudes-harnesses-and-operating-instructions-likely-caused-degradation

- The Real Reason Claude Got Dumber (Pebblous, 2026)
  https://blog.pebblous.ai/blog/claude-harness-postmortem/en/

- AI Coding Flywheel Effect (MindStudio, 2026)
  https://www.mindstudio.ai/blog/ai-coding-models-flywheel-effect

- Anthropic Revenue and Claude Code Run Rate
  $1B run rate: https://www.aicerts.ai/news/anthropics-specialized-llm-claude-code-reaches-1b-run-rate/
  $44B annualized (April 2026): https://cybercorsairs.com/anthropics-revenue-math-is-staggering/

- Inside the Agent Harness (Jonathan Fulton, Medium, April 2026)
  https://medium.com/jonathans-musings/inside-the-agent-harness-how-codex-and-claude-code-actually-work-63593e26c176

- Enterprise RLHF Implementation
  https://cleverx.com/blog/enterprise-rlhf-implementation-checklist-complete-deployment-framework-for-production-systems

- Reinforcement Learning Infrastructure
  https://introl.com/blog/reinforcement-learning-infrastructure-rlhf-robotics-gpu-clusters-2025

- What Is an Agent Harness (MindStudio)
  https://www.mindstudio.ai/blog/what-is-agent-harness-architecture-explained

Rahul Kashyap is CTO & Co-founder at Designare Solutions and DeepStory, based in Bangalore.